Building a Data Lakehouse: A Practical Guide

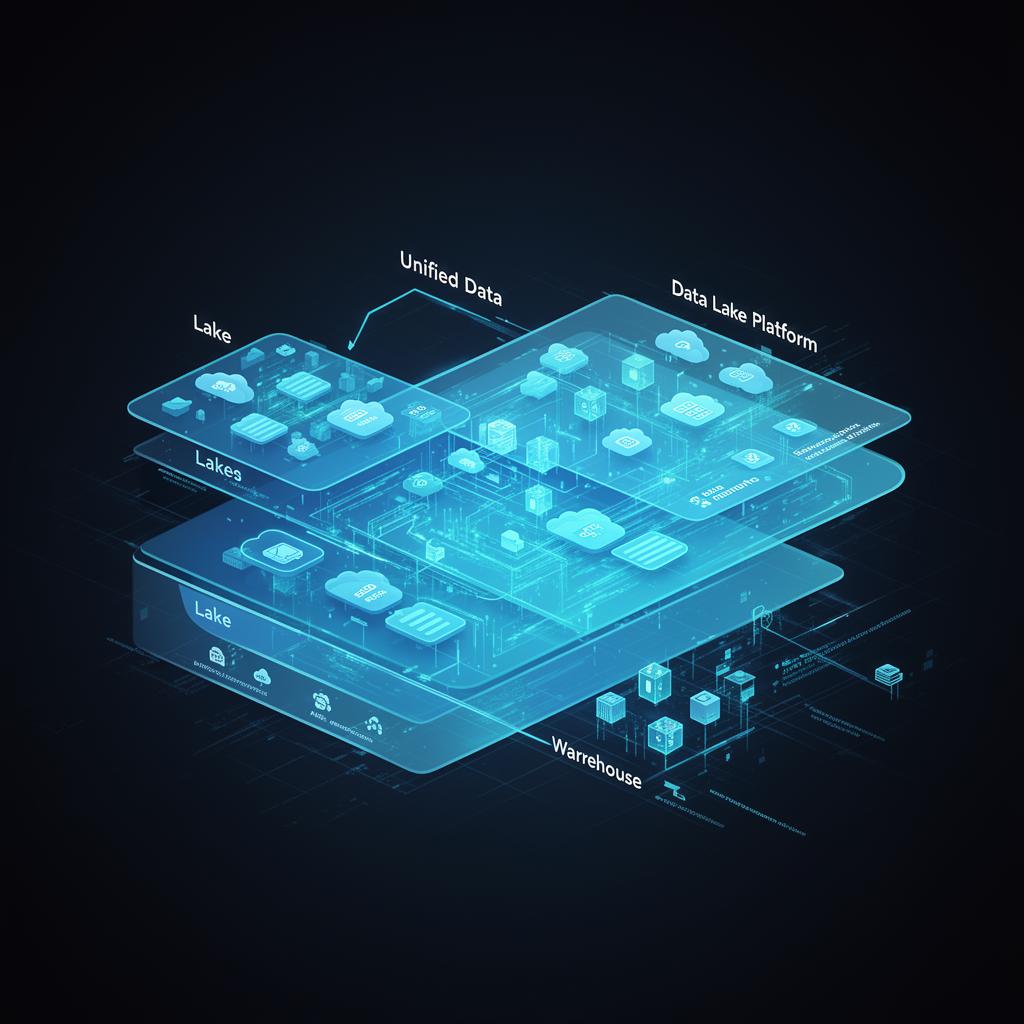

The Data Lakehouse architecture has emerged as a major shift in how organizations manage and analyze their data. By combining the low-cost, flexible storage of data lakes with the performance and reliability of data warehouses, the Lakehouse lets BI, SQL analytics, and machine learning work from a single copy of the data.

What is a Data Lakehouse?

A Data Lakehouse is an architecture that keeps data in open file formats on low-cost storage, as a data lake does, and adds the management features of a data warehouse on top. It provides:

- ACID transactions on data lakes

- Schema enforcement and governance

- BI support directly on source data

- Decoupled storage and compute

- Support for diverse data types — structured, semi-structured, and unstructured
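
Schema enforcement is the easiest of these guarantees to picture in code. The following is a toy, in-memory sketch, not any table format's API: `Table` and `SchemaMismatchError` are hypothetical names, and Python types stand in for a real table schema. Every write is validated as a whole against the declared schema, so one bad row rejects the entire batch rather than leaving a partial write behind.

```python
from dataclasses import dataclass, field
from typing import Any


class SchemaMismatchError(ValueError):
    """Raised when a write does not match the table schema (hypothetical)."""


@dataclass
class Table:
    # Column name -> expected Python type; a stand-in for a real table schema.
    schema: dict[str, type]
    rows: list[dict[str, Any]] = field(default_factory=list)

    def append(self, batch: list[dict[str, Any]]) -> None:
        # Validate the whole batch before writing anything, so a bad row
        # rejects the entire write instead of leaving a partial one behind.
        for i, row in enumerate(batch):
            if set(row) != set(self.schema):
                raise SchemaMismatchError(
                    f"row {i}: columns {sorted(row)} != {sorted(self.schema)}"
                )
            for col, expected in self.schema.items():
                if not isinstance(row[col], expected):
                    raise SchemaMismatchError(
                        f"row {i}: column {col!r} expected {expected.__name__}, "
                        f"got {type(row[col]).__name__}"
                    )
        self.rows.extend(batch)


orders = Table(schema={"order_id": int, "amount": float})
orders.append([{"order_id": 1, "amount": 9.99}])
try:
    # The second row has a string order_id, so the whole batch is rejected.
    orders.append([{"order_id": 2, "amount": 5.0}, {"order_id": "3", "amount": 1.0}])
except SchemaMismatchError as err:
    print("rejected:", err)
print(len(orders.rows))  # → 1
```

Production table formats perform this check against the table's metadata at commit time and typically treat schema changes as an explicit evolution step rather than silently accepting mismatched writes.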

Key Components

1. Storage Layer

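The foundation of a Lakehouse is low-cost object storage holding data in open file formats such as Apache Parquet, managed by an open table format like Delta Lake, Apache Iceberg, or Apache Hudi. These table formats add a metadata layer over the raw files that provides ACID transactions, schema enforcement, and versioned reads (time travel), bringing warehouse-style reliability to the data lake.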
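
To see how a table format can add ACID guarantees on top of plain files, here is a deliberately simplified sketch loosely modeled on the JSON commit files that Delta Lake keeps in a table's `_delta_log` directory. It is not the on-disk format of Delta Lake, Iceberg, or Hudi: `commit`, `snapshot`, and the JSON layout are hypothetical, and a local hard link stands in for the atomic "create only if absent" guarantee a real implementation needs from its storage system. Each commit is a numbered log entry that only one writer can create, and readers rebuild the set of visible data files by replaying the log in order.

```python
import json
import os
import tempfile
from typing import Optional


def _log_path(log_dir: str, version: int) -> str:
    # Zero-padded names keep the log sorted lexicographically by version.
    return os.path.join(log_dir, f"{version:020d}.json")


def latest_version(log_dir: str) -> int:
    """Return the highest committed version, or -1 for an empty table."""
    versions = [int(n.split(".")[0]) for n in os.listdir(log_dir) if n.endswith(".json")]
    return max(versions, default=-1)


def commit(log_dir: str, version: int, add: list[str], remove: list[str]) -> bool:
    """Try to publish commit `version`; return False if another writer got there first."""
    # Write the full entry to a private temp file first...
    fd, tmp = tempfile.mkstemp(dir=log_dir, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump({"add": add, "remove": remove}, f)
    try:
        # ...then publish it with os.link, which fails if the target already exists,
        # so readers never see a half-written entry and only one writer wins.
        os.link(tmp, _log_path(log_dir, version))
        return True
    except FileExistsError:
        return False
    finally:
        os.remove(tmp)


def snapshot(log_dir: str, as_of: Optional[int] = None) -> set[str]:
    """Replay commits 0..as_of to get the data files visible at that version."""
    end = latest_version(log_dir) if as_of is None else as_of
    files: set[str] = set()
    for v in range(end + 1):
        with open(_log_path(log_dir, v)) as f:
            entry = json.load(f)
        files -= set(entry["remove"])
        files |= set(entry["add"])
    return files


with tempfile.TemporaryDirectory() as log_dir:
    commit(log_dir, 0, add=["part-0.parquet"], remove=[])
    commit(log_dir, 1, add=["part-1.parquet"], remove=[])
    # A second writer that also targeted version 1 loses the race and must retry.
    lost = not commit(log_dir, 1, add=["part-x.parquet"], remove=[])
    # Compaction: swap two small files for one larger file in a single atomic commit.
    commit(log_dir, 2, add=["part-2.parquet"], remove=["part-0.parquet", "part-1.parquet"])
    current = snapshot(log_dir)
    as_of_v1 = snapshot(log_dir, as_of=1)  # "time travel" to an older version

print(lost, sorted(current), sorted(as_of_v1))
# → True ['part-2.parquet'] ['part-0.parquet', 'part-1.parquet']
```

Replaying every commit gets slow as the log grows, which is why Delta Lake, for example, periodically writes checkpoint files; that optimization is omitted here.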

2. Metadata Layer

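A metadata catalog such as Unity Catalog or Apache Hive Metastore records where each table lives and what its schema is, which makes data discoverable across engines. Governance-focused catalogs such as Unity Catalog also add fine-grained access control and lineage tracking.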
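
The catalog's core job can be sketched as a lookup table from names to locations and schemas, plus edges recording which tables were derived from which. This is a toy illustration under stated assumptions, not the API of Unity Catalog or the Hive Metastore: `Catalog`, `TableEntry`, the table names, and the `s3://lake/...` paths are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class TableEntry:
    name: str                # e.g. "raw.orders" (hypothetical naming)
    location: str            # where the table's files live in object storage
    columns: dict[str, str]  # column name -> type name
    upstream: list[str] = field(default_factory=list)  # tables it was derived from


class Catalog:
    """Toy metadata catalog: name -> location/schema, plus lineage edges."""

    def __init__(self) -> None:
        self._tables: dict[str, TableEntry] = {}

    def register(self, entry: TableEntry) -> None:
        # Lineage is only recorded for inputs the catalog already knows about.
        missing = [u for u in entry.upstream if u not in self._tables]
        if missing:
            raise KeyError(f"unknown upstream tables: {missing}")
        self._tables[entry.name] = entry

    def lookup(self, name: str) -> TableEntry:
        return self._tables[name]

    def search(self, column: str) -> list[str]:
        """Discovery: which tables expose a given column?"""
        return sorted(n for n, t in self._tables.items() if column in t.columns)

    def lineage(self, name: str) -> list[str]:
        """All tables `name` depends on, directly or transitively."""
        seen: set[str] = set()
        stack = list(self._tables[name].upstream)
        while stack:
            parent = stack.pop()
            if parent not in seen:
                seen.add(parent)
                stack.extend(self._tables[parent].upstream)
        return sorted(seen)


cat = Catalog()
cat.register(TableEntry("raw.orders", "s3://lake/raw/orders",
                        {"order_id": "bigint", "customer_id": "bigint", "amount": "double"}))
cat.register(TableEntry("raw.customers", "s3://lake/raw/customers",
                        {"customer_id": "bigint", "region": "string"}))
cat.register(TableEntry("silver.orders_enriched", "s3://lake/silver/orders_enriched",
                        {"order_id": "bigint", "region": "string", "amount": "double"},
                        upstream=["raw.orders", "raw.customers"]))
cat.register(TableEntry("gold.revenue_by_region", "s3://lake/gold/revenue_by_region",
                        {"region": "string", "revenue": "double"},
                        upstream=["silver.orders_enriched"]))

print(cat.search("region"))
# → ['gold.revenue_by_region', 'raw.customers', 'silver.orders_enriched']
print(cat.lineage("gold.revenue_by_region"))
# → ['raw.customers', 'raw.orders', 'silver.orders_enriched']
```

Walking lineage transitively is what lets a catalog answer questions such as "which reports are affected if `raw.orders` changes?", which is the governance use case the paragraph above describes.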

3. Query Engine

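Query engines such as Apache Spark, Presto, or Trino run SQL directly against lakehouse tables. Because storage and compute are decoupled, several engines can share the same tables, and compute can be scaled up or down independently of the data.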
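
One reason these engines can query plain files quickly is that table formats such as Delta Lake and Apache Iceberg record per-file column statistics, such as minimum and maximum values, in their metadata. An engine can use them to skip files that cannot contain matching rows before reading any data. The sketch below shows only that pruning step; `FileStats` and `prune` are hypothetical names, the statistics are invented for illustration, and real engines combine this with partition pruning and much richer predicates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileStats:
    path: str
    min_value: int  # minimum of the filtered column within this file
    max_value: int  # maximum of the filtered column within this file


def prune(files: list[FileStats], lo: int, hi: int) -> list[str]:
    """Keep only files whose [min, max] range overlaps the predicate lo <= col <= hi."""
    return [f.path for f in files if f.max_value >= lo and f.min_value <= hi]


# Hypothetical stats for an `order_date` column stored as yyyymmdd integers.
files = [
    FileStats("part-0.parquet", 20240101, 20240131),
    FileStats("part-1.parquet", 20240201, 20240229),
    FileStats("part-2.parquet", 20240301, 20240331),
]

# Equivalent to: WHERE order_date BETWEEN 20240215 AND 20240305
to_scan = prune(files, 20240215, 20240305)
print(to_scan)  # → ['part-1.parquet', 'part-2.parquet']
```

Pruning only narrows the set of files to read; the engine still filters the rows inside `part-1.parquet` and `part-2.parquet`, since their ranges merely overlap the predicate.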

Getting Started

- Assess your current data estate: understand what data you have and where it lives

- Define your data products: identify the key data products your organization needs

- Choose your technology stack: select a table format, metadata catalog, and query engine that fit your requirements

- Implement incrementally: start with high-value use cases and expand from there

Conclusion

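The Data Lakehouse represents the future of data management. By keeping one copy of data in open formats and serving both BI and machine learning from it, organizations can reduce duplicated storage and pipelines, improve data quality through schema enforcement and transactional writes, and accelerate time-to-insight.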

"The Lakehouse is not just a technology choice — it's a strategic decision that enables a data-centric organization." — StarNET Team